What Is an llms.txt File? (What, Why, and How for Modern SEO & GEO)

If you’ve spent any time optimizing your website for Google, you already know the value of speaking the search engine’s language. But here’s the shift happening right now: AI assistants like ChatGPT, Claude, Gemini, and Perplexity are becoming discovery channels in their own right. And they don’t read your site the way Googlebot does.

Enter the llms.txt file—a new, Markdown-based standard proposed in late 2024 to make your website easier for large language models to understand. Think of it as a curated overview of your most important content, stripped of all the HTML clutter that confuses AI systems. In this guide, we’ll break down the what, why, and how of llms.txt so you can decide whether it belongs in your digital strategy.

Introduction to llms.txt and Why It Matters Now

The llms.txt proposal emerged from a simple observation: AI tools struggle to extract meaningful information from complex HTML pages. When a user asks ChatGPT about your product pricing or service offerings, the model doesn’t crawl your entire site like a search engine. It might grab a handful of web pages in real time, often missing your most relevant content entirely.

Jeremy Howard, co-founder of Answer.AI, introduced the llms.txt specification in September 2024 as a solution. The concept gained traction quickly because it addresses a real gap—there was no widely accepted standard for providing context to AI systems, the way robots.txt guides traditional search engine crawlers.

For B2B and B2C brands investing in digital growth, this matters. AI assistants are increasingly where users start their research, especially for complex purchases or technical queries. If these tools misrepresent your pricing plans, confuse your service offerings, or surface outdated information, you’re losing opportunities before prospects ever reach your site.

At TG, we see llms.txt as complementary to SEO—not a replacement. It’s part of what the industry calls GEO (Generative Engine Optimization), and it fits naturally into the flywheel marketing approach we use to help clients drive sustainable, measurable growth across channels.

What Is an llms.txt File? (Core Definition)

An llms.txt file is a Markdown-formatted text file placed at /llms.txt in your site’s root directory to give large language models a clean, structured guide to your key information. Unlike traditional web pages that mix content with navigation menus, scripts, and ads, this file delivers LLM-friendly plain text that AI tools can process without parsing HTML.

The file is designed primarily for reasoning engines and AI assistants rather than search engines. It doesn’t affect your Google rankings directly—it helps AI systems understand what your site is about and where to find authoritative answers.

A typical llms.txt file follows a precise format:

| Element | Purpose |
|---|---|
| H1 (Project/Brand Name) | Identifies your organization |
| Blockquote Summary | 1-3 sentence overview with key facts |
| H2 “Core” Section | Links to your most important content |
| H2 “Optional” Sections | Secondary resources and detailed information |

Some organizations also maintain an /llms-full.txt variant. While the standard llms.txt provides a curated overview with links, llms-full.txt contains the flattened Markdown content of those docs directly—useful for providing full context when AI tools have larger context windows to work with.

The format is deliberately simple Markdown (no XML required), making it readable by both people and AI models. You can open it in any text editor, and any LLM can parse it without special tooling.
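To make the structure concrete, here is a minimal Python sketch that splits an llms.txt document into its name, summary, and linked sections. The function name `parse_llms_txt`, the regular expression, and the sample content are illustrative assumptions, not part of the proposal, and the sketch only handles the simple layout described in the table above.

```python
import re

# Matches a list entry of the form "- [Title](url): optional description".
LINK_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<desc>.*))?$"
)


def parse_llms_txt(text):
    """Split an llms.txt document into name, summary, and linked sections."""
    doc = {"name": None, "summary": None, "sections": {}}
    current = None  # title of the H2 section currently being read
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and doc["name"] is None:
            doc["name"] = line[2:].strip()
        elif line.startswith("> ") and doc["summary"] is None:
            doc["summary"] = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc["sections"][current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                doc["sections"][current].append(match.groupdict())
    return doc


# Hypothetical sample content for illustration only.
SAMPLE = """# Example Co

> Example Co sells hypothetical widgets to small businesses.

## Core

- [Pricing](https://example.com/pricing): Plans and prices
- [FAQ](https://example.com/faq)
"""

parsed = parse_llms_txt(SAMPLE)
print(parsed["name"])
print([entry["title"] for entry in parsed["sections"]["Core"]])
```

Because the format is plain Markdown, a few string checks and one regular expression are enough to read it, which is a large part of its appeal.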

Why llms.txt Exists: How LLMs Really Read Your Site

Here’s the technical problem: large language models have limited context windows, and even the larger ones fill up quickly when most of the input is markup. When they try to extract meaning from your website content, they’re wading through navigation elements, footer links, JavaScript bundles, tracking pixels, and advertising code. The actual relevant information might represent only 10-20% of what they’re processing.

Unlike search engines’ crawl processes that index entire sites over time, most AI tools fetch content in real time during user queries. They might grab your homepage and two or three internal pages, then generate an answer. If your pricing page wasn’t in that handful, the model might hallucinate incorrect prices—or worse, pull outdated information from a cached source.

This causes tangible business problems:

- Outdated product information surfaces in AI responses

- Pricing errors that frustrate and confuse prospects

- Misaligned brand messaging that doesn’t reflect your current positioning

- Partial understanding of complex service offerings

The llms.txt file acts as a cheat sheet for AI. It strips away the noise, summarizes what matters most, and points models directly to authoritative documentation, FAQs, and resource hubs. Instead of hoping an AI assistant finds your most important content, you’re explicitly guiding it there.
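As a rough illustration of the noise problem, the sketch below uses Python's standard `html.parser` to estimate how much of a page is visible body text. The class name, the set of skipped tags, and the sample page are assumptions made for this example, and the character ratio is only a crude proxy for token usage.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text outside <script>, <style>, <nav>, and <footer> elements."""

    SKIP = {"script", "style", "nav", "footer"}

    def __init__(self):
        super().__init__()
        self.depth = 0    # number of skipped elements we are currently inside
        self.chunks = []  # visible text fragments, in document order

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth == 0 and data.strip():
            self.chunks.append(data.strip())


def content_ratio(html):
    """Return (visible_text, share of the raw HTML made up of visible text)."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks)
    ratio = (len(text) / len(html)) if html else 0.0
    return text, ratio


# Hypothetical page used only to exercise the function.
PAGE = (
    "<html><head><script>var t = 1;</script><style>p{color:red}</style></head>"
    "<body><nav><a href='/'>Home</a></nav>"
    "<p>Plans start at $10 per month.</p>"
    "<footer>Copyright</footer></body></html>"
)

text, ratio = content_ratio(PAGE)
print(text)
print(f"{ratio:.0%} of the page is visible body text")
```

Running something like this against your own templates gives a quick sense of how much a model has to discard before it reaches the content you care about.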

This becomes particularly impactful for large, frequently updated sites—SaaS platforms with extensive docs, manufacturers with deep product catalogs, and regulated industries where accuracy isn’t optional.

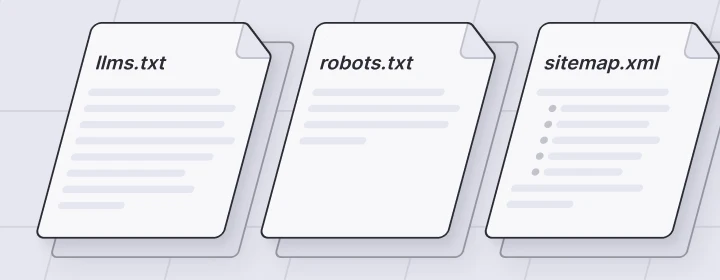

llms.txt vs robots.txt vs sitemap.xml

You likely already have robots.txt and sitemap.xml in your site’s root directory. So, where does llms.txt fit? These three files are all “machine-facing standards,” but they serve different audiences and use cases.

robots.txt is a plain text file at /robots.txt that tells search engine crawlers like Googlebot or Bingbot where they can and can’t go on your site. It’s focused on crawl rules—blocking bots from admin pages, staging environments, or duplicate content. It doesn’t help crawlers understand your content; it just controls access.

sitemap.xml is an XML file (usually at /sitemap.xml) that lists all your indexable URLs along with metadata like priority levels and last-modified dates. It helps search engines discover and index your web pages efficiently. It’s comprehensive by design—listing everything worth crawling.

llms.txt takes a different approach. Instead of listing everything, it curates what matters. It’s a plain-text Markdown file intended for AI tools and agents, not ranking algorithms. The goal isn’t exhaustive discovery; it’s providing LLM-friendly content that helps models generate accurate answers.

Here’s how the three files coexist in your strategy:

| File | Audience | Purpose |
|---|---|---|
| robots.txt | Search engine bots | Crawl control and access rules |
| sitemap.xml | Search engines | URL discovery and indexing |
| llms.txt | LLMs and AI agents | Content comprehension and GEO |

One critical distinction: llms.txt currently has no blocking directives. It’s more of a guide than a gatekeeper. You’re not restricting AI access—you’re helping AI systems find your most valuable pages faster.
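The gatekeeper role of robots.txt is easy to see in code. This short sketch uses Python's standard `urllib.robotparser` to evaluate an allow/deny decision; the rules, the `ExampleBot` agent name, and the URLs are made up for illustration. There is no equivalent check to run against llms.txt, because the file contains only a summary and links.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
"""

rules = RobotFileParser()
rules.parse(ROBOTS_TXT.splitlines())

# robots.txt answers a yes/no question: may this agent fetch this URL?
print(rules.can_fetch("ExampleBot", "https://example.com/admin/users"))  # False
print(rules.can_fetch("ExampleBot", "https://example.com/pricing"))      # True
```

If you need to restrict AI crawlers, that still belongs in robots.txt; llms.txt only suggests where to look.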

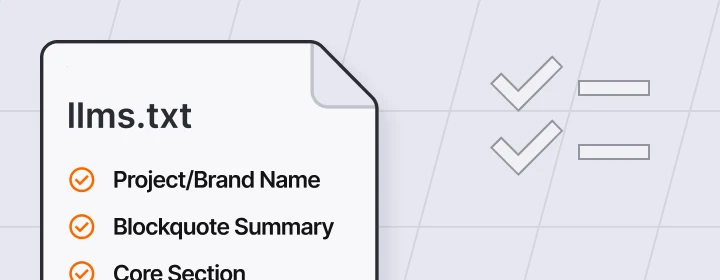

What Goes Inside an llms.txt File?

The structure follows a consistent pattern that balances simplicity with utility. Here’s what goes where:

H1: Project or Brand Name: Start with a clear identifier. For a company like TG, this would simply be “Timmermann Group” or “TG – Full-Service Digital Marketing Agency.”

Blockquote Summary: Immediately below the H1, include a 1-3 sentence summary packed with key facts. This gives AI systems instant context about who you are, what you do, and who you serve. Think of it as your elevator pitch for machines.

H2: Core Section: This is where you list links to your most important content. For B2B/B2C brands, prioritize:

- Product or service overview pages

- Pricing or plan details

- Implementation or onboarding guides

- API documentation (if applicable)

- Support or SLA pages

- High-intent FAQs

Each entry should include a link title, URL, and an optional description explaining its relevance.

H2: Optional/Additional Sections: Secondary resources that provide deeper context:

- Whitepapers and case studies

- Blog or resource hubs

- Integration documentation

- Regional or language-specific content

- Legal or compliance documentation

The /llms-full.txt Variant: If you have extensive technical documentation, API references, or developer guides, consider maintaining an llms-full.txt file that contains the complete flattened Markdown text of those docs. This maximizes context for AI systems with larger context windows.
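One way to picture the flattened variant is a simple concatenation of your Markdown docs under one header. The sketch below assumes a layout (H1, blockquote, then one H2 per document); that layout and the function name `build_llms_full` are our assumptions rather than a documented format, since llms-full.txt is a convention rather than a formal specification.

```python
def build_llms_full(site_name, summary, docs):
    """Concatenate (title, markdown_body) pairs into one llms-full.txt string."""
    parts = [f"# {site_name}", "", f"> {summary}", ""]
    for title, body in docs:
        parts.append(f"## {title}")
        parts.append("")
        parts.append(body.strip())
        parts.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(parts).rstrip() + "\n"


# Hypothetical documents for illustration.
full = build_llms_full(
    "Example Co",
    "Example Co sells hypothetical widgets.",
    [
        ("Quickstart", "Install the CLI.\n"),
        ("API", "Call `GET /v1/items`."),
    ],
)
print(full)
```

In practice you would also need to decide how to handle headings inside each document body so they do not collide with the H2 section titles.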

Style Guidelines:

- Use concise, descriptive headings

- Write clear link labels that explain what users will find

- Keep URLs current (stale links undermine trust)

- Avoid internal jargon that AI might misinterpret

- Prioritize content that answers: who you are, what you do, who you serve, and how it works
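A small lint script can catch structural slips before the file goes live. This is a minimal sketch assuming the layout described above; the function name `lint_llms_txt` and the specific checks are illustrative choices, not requirements from the proposal.

```python
import re

# A well-formed entry: "- [Title](url)" with an optional ": description".
ENTRY_RE = re.compile(r"^- \[[^\]]+\]\([^)\s]+\)(: .+)?$")


def lint_llms_txt(text):
    """Return a list of human-readable problems found in an llms.txt draft."""
    problems = []
    lines = [line.rstrip() for line in text.splitlines() if line.strip()]
    if not lines or not lines[0].startswith("# "):
        problems.append("first non-empty line should be an H1 ('# Name')")
    if not any(line.startswith("> ") for line in lines):
        problems.append("missing blockquote summary ('> ...')")
    if not any(line.startswith("## ") for line in lines):
        problems.append("no H2 sections found")
    for line in lines:
        # Flag list items that are not plain link entries, including items that
        # start with a typographic en dash, which Markdown does not treat as a
        # list marker.
        if line.startswith(("-", "\u2013")) and not ENTRY_RE.match(line):
            problems.append(f"malformed link entry: {line}")
    return problems


# Hypothetical draft with three problems: no H1, no summary, en-dash bullet.
draft = "## Core\n\n\u2013 [Pricing](/pricing): Plans and prices\n"
for problem in lint_llms_txt(draft):
    print(problem)
```

The en-dash check matters more than it looks: word processors and some CMS editors silently convert hyphens, which breaks the list syntax.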

Why llms.txt Matters for SEO, GEO, and Revenue Outcomes

GEO—Generative Engine Optimization—is the practice of optimizing content so AI systems give accurate, brand-aligned answers that drive qualified traffic and leads. It’s not about gaming algorithms; it’s about providing context, so AI tools represent your business correctly.

The llms.txt file doesn’t replace keyword research, technical SEO, or content strategy. Instead, it strengthens how your existing content is interpreted and surfaced by AI-driven search interfaces. When someone asks Perplexity about “best digital marketing agencies in St. Louis,” you want the response to reflect your actual services, not a garbled summary pulled from a random blog post.

Tangible business benefits include:

- More accurate AI summaries of your products and pricing plans

- Better handling of niche or technical user queries

- Improved trust when users cross-check AI answers against your site

- Reduced support tickets from AI-induced confusion

Strategic scenarios where llms.txt delivers value:

- Complex B2B sales cycles where prospects research extensively before contacting sales

- Regulated industries (healthcare, finance) where accuracy is legally important

- Multi-location or franchise brands with location-specific services

- API-driven platforms where developer docs drive product adoption

At TG, we fold llms.txt into broader digital strategies that combine SEO, PPC, UX/CRO, and content marketing. It’s one piece of the flywheel—when AI systems understand your business better, they provide better referrals, which drive better conversions, which generate data to refine your approach further.

How to Create an llms.txt File (Step-by-Step)

Creating an llms.txt file doesn’t require programming skills or specialized tools. Here’s a practical workflow:

Step 1: Inventory Your Key Pages: Start by listing the 20-30 pages that matter most for your business. Ask yourself: if a potential customer asked an AI assistant about our company, what pages would we want it to reference?

Step 2: Prioritize Core vs. Optional Content: Separate your list into two tiers:

- Core: Pages essential for understanding your business (services, pricing, main product pages)

- Optional: Supporting content that adds depth (case studies, blog posts, integration guides)

Step 3: Draft Your Markdown Outline: Open any text editor and create your structure:

# Your Brand Name

> A 1-3 sentence summary explaining what your company does, who you serve, and what makes you different.

## Core

- [Service Overview](/services): Brief description of what this page contains

- [Pricing](/pricing): Explanation of your pricing structure and plans

- [FAQ](/faq): Answers to common questions about your offerings

## Optional

- [Case Studies](/case-studies): Real examples of client results

- [Blog](/blog): Industry insights and company updates
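If your page inventory already lives in a spreadsheet or CMS export, the same outline could be generated by a script instead of typed by hand. The sketch below is one possible approach; `render_llms_txt` and its input shape are hypothetical, and the entries simply mirror the template above.

```python
def render_llms_txt(name, summary, sections):
    """Render an llms.txt string.

    `sections` maps a section title to a list of
    (label, url, description) tuples; description may be empty.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, entries in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for label, url, description in entries:
            suffix = f": {description}" if description else ""
            # Plain ASCII hyphen: Markdown list markers must not be en dashes.
            lines.append(f"- [{label}]({url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


draft = render_llms_txt(
    "Your Brand Name",
    "A 1-3 sentence summary of what your company does and who you serve.",
    {
        "Core": [
            ("Service Overview", "/services", "What this page contains"),
            ("Pricing", "/pricing", "Pricing structure and plans"),
        ],
        "Optional": [("Blog", "/blog", "")],
    },
)
print(draft)
```

Generating the file from structured data also makes it easier to regenerate after a site restructure.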

Step 4: Deploy to Your Root Directory: Save the file as plain UTF-8 text named llms.txt and upload it to your web root. It should be accessible at https://yourdomain.com/llms.txt. Verify by visiting the URL in your browser.
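The browser check can also be scripted. In this sketch, `check_response` holds the checks and `check_url` performs the actual request with Python's standard `urllib.request`. The accepted content types are our assumption (the proposal does not mandate one), and both function names are illustrative.

```python
from urllib.request import urlopen


def check_response(status, content_type, body):
    """Return a list of problems with a fetched /llms.txt response."""
    problems = []
    if status != 200:
        problems.append(f"expected HTTP 200, got {status}")
    # Assumption: plain text or Markdown are reasonable types to serve.
    if not content_type.lower().startswith(("text/plain", "text/markdown")):
        problems.append(f"unexpected Content-Type: {content_type}")
    if not body.lstrip().startswith("# "):
        problems.append("body does not start with an H1 heading")
    return problems


def check_url(url):
    """Fetch `url` and run the checks above (makes a real network request).

    Note: urlopen raises urllib.error.HTTPError for 4xx/5xx responses,
    so a missing file surfaces as an exception rather than a status code.
    """
    with urlopen(url, timeout=10) as response:
        body = response.read().decode("utf-8-sig")  # tolerate a UTF-8 BOM
        content_type = response.headers.get("Content-Type", "")
        return check_response(response.status, content_type, body)
```

Calling `check_url("https://yourdomain.com/llms.txt")` after each deploy returns an empty list when everything looks right.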

Step 5: Version Control and Maintenance: Keep your llms.txt in version control (Git, your CMS, wherever you track site changes). This isn’t a one-time task—it’s a living document that should evolve with your site.

TG handles planning, drafting, and technical deployment of llms.txt as part of SEO and content engagements, particularly for clients with complex information architectures.

Integrating llms.txt with AI Tools, Dev Workflows, and Docs

As of early 2025, most large language models don’t automatically crawl llms.txt the way search engines crawl robots.txt. Users, agents, or tools typically supply the file, or a link to it, as context.

This opens practical integration opportunities:

For Development Teams:

- Wire /llms.txt and /llms-full.txt into AI-assisted workflows

- Use the file as context for code review bots

- Feed it to in-IDE copilots for project-aware coding assistance

- Share with development environments like Cursor for contextual help

For Support and Marketing Teams:

- Upload /llms-full.txt to ChatGPT or Claude as a single file for support reps to reference

- Use it as quick-access context when drafting AI-assisted content

- Share with agencies or contractors who need to understand your business quickly
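Supplying the file as context usually comes down to placing its text in front of the question. Here is a minimal sketch of that idea; the prompt wording, the `<site_guide>` delimiter, and the 20,000-character cap are arbitrary choices for illustration, and the resulting string would be passed to whichever AI tool or API your team uses.

```python
def build_prompt(llms_txt, question, max_chars=20000):
    """Wrap llms.txt content and a user question into one prompt string."""
    context = llms_txt.strip()
    if len(context) > max_chars:
        # Truncate oversized files; the default cap is an arbitrary assumption.
        context = context[:max_chars]
    return (
        "Use the site guide below as background when answering.\n\n"
        "<site_guide>\n"
        f"{context}\n"
        "</site_guide>\n\n"
        f"Question: {question}"
    )


# Hypothetical content and question for illustration.
prompt = build_prompt(
    "# Example Co\n\n> Example Co sells hypothetical widgets.",
    "What does Example Co sell?",
)
print(prompt)
```

Keeping the guide clearly delimited from the question makes it easier for a model to tell background material apart from the request.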

Ecosystem Tools: Several directories now list llms.txt URLs, and open-source generators can help automate file creation. If you’re evaluating third-party generator tools, vet them carefully before granting access to private repos or CMSs—security matters.

TG collaborates with in-house dev and documentation teams to align llms.txt with existing standards like API docs, support centers, and product onboarding flows. The goal is a unified approach where AI tools get consistent, accurate answers regardless of which page they reference.

How llms.txt Fits into TG’s SEO, Content, and Flywheel Marketing Approach

TG has been helping businesses grow through digital marketing since 2003. We focus on measurable results across SEO, PPC, web design, UX/CRO, social media marketing, email marketing, and analytics. llms.txt fits naturally into what we already do—it’s one component of an “AI-ready content” strategy, not a silver bullet.

The file works best when your underlying site is already fast, technically sound, and rich in genuinely helpful content. If your web pages are thin or outdated, llms.txt won’t fix that. But if you’ve invested in quality content and want AI systems to surface it accurately, the file becomes a force multiplier.

Here’s how it supports the flywheel model:

- Better AI understanding leads to more accurate referrals

- Accurate referrals drive more satisfied visitors

- Satisfied visitors convert at higher rates

- Higher conversions generate richer behavioral data

- Richer data informs better marketing decisions

Example scenarios where TG might recommend llms.txt:

- A SaaS company with extensive developer documentation that wants AI assistants to guide users to the right integration guides

- A manufacturer with complex product specs where AI currently surfaces outdated SKUs or discontinued items

- A healthcare provider with compliance-sensitive FAQs where accuracy isn’t just preferred—it’s required

If you’re wondering whether llms.txt would create meaningful lift for your specific site and audience, schedule a consultation with Timmermann Group to explore the opportunity.

Common Pitfalls, Limitations, and Open Questions

The llms.txt standard is still emerging. While the llms.txt proposal has gained traction in developer and documentation communities, adoption by major AI providers remains uneven. There’s no universal enforcement mechanism, and no guarantee that every AI assistant will respect or even read your file.

Common mistakes to avoid:

- Treating llms.txt as an SEO shortcut (it complements search visibility rather than replacing it)

- Stuffing the file with marketing fluff instead of genuinely helpful content

- Letting URLs go stale after site restructures

- Duplicating your entire site without thoughtful curation

- Generating the file once with a static process that doesn’t pick up later content changes

Criticisms from the SEO community: Some practitioners argue that sitemaps and structured data already solve related problems. They have a point—these existing standards handle search engine needs well. But llms.txt addresses a different audience (AI tools vs. crawlers) and a different goal (comprehension vs. indexing). At TG, we view these as complementary tools, not competing ones.

Real constraints to acknowledge:

- Adoption is voluntary—LLM providers aren’t required to read your file

- Direct ranking impact is uncertain and likely minimal

- Maintenance overhead increases for dynamic sites with frequent updates

- No standardization body has ratified the format yet

Our recommendation: pilot llms.txt on high-value sections of your site, monitor AI-driven mentions and support ticket quality, and iterate based on real-world signals rather than hype.

How to Measure Impact and Maintain Your llms.txt Over Time

Measuring llms.txt impact requires looking beyond traditional SEO metrics. Here’s what to track:

Quantitative indicators:

- Frequency and accuracy of AI-sourced leads (ask new contacts how they found you)

- Support ticket quality—are fewer users arriving with misconceptions?

- Branded query performance in AI-powered search interfaces

- Changes in direct and referral traffic from AI tools (check server logs for AI bot patterns)
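For the server-log check, a few lines of Python can count how often known AI agents request the file. The user-agent substrings below are examples we believe are in use, but the list is an assumption that should be verified against each vendor's current documentation. The sketch also assumes an access log where the request path appears surrounded by spaces, as in the common combined log format.

```python
from collections import Counter

# Illustrative, non-exhaustive user-agent substrings; verify before relying on them.
AI_AGENT_HINTS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")


def count_ai_hits(log_lines, path="/llms.txt"):
    """Count requests for `path` per AI agent hint in access-log lines."""
    hits = Counter()
    for line in log_lines:
        if f" {path} " not in line:
            continue  # request was for a different path
        for hint in AI_AGENT_HINTS:
            if hint in line:
                hits[hint] += 1
                break
    return hits


# Hypothetical log lines for illustration.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2025:10:00:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [10/Jan/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '198.51.100.2 - - [10/Jan/2025:10:09:00 +0000] "GET /llms.txt HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(count_ai_hits(SAMPLE_LOG))
```

Tracking these counts over time shows whether any AI agents are fetching the file at all, which is a useful sanity check before attributing changes to it.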

Qualitative checks: Periodically test how major AI assistants describe your business. Ask ChatGPT, Gemini, Claude, and Perplexity specific questions about your brand:

- “What does [Your Company] do?”

- “What are [Your Company]’s pricing plans?”

- “How does [Your Company] compare to [Competitor]?”

Compare responses before and after llms.txt deployment. Document improvements and gaps.

Update cadence: Review your llms.txt file after:

- Major product launches

- Pricing or plan changes

- Expansion into new markets

- Website restructures or redesigns

- Significant documentation updates

Treat it like any other critical site asset—not a one-time task, but an ongoing responsibility.
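Part of that upkeep can be automated. The sketch below compares the links in llms.txt against the URLs in your sitemap and reports any that have disappeared. It assumes absolute URLs in both files and a standard sitemaps.org `urlset` document; the function name and sample data are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
# Captures the URL part of Markdown links such as [Title](https://...).
LINK_RE = re.compile(r"\[[^\]]+\]\(([^)\s]+)\)")


def find_stale_links(llms_txt, sitemap_xml):
    """Return llms.txt URLs that do not appear in the sitemap."""
    root = ET.fromstring(sitemap_xml)
    live = {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }
    return [url for url in LINK_RE.findall(llms_txt) if url not in live]


# Hypothetical inputs for illustration.
SITEMAP = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/pricing</loc></url>"
    "<url><loc>https://example.com/faq</loc></url>"
    "</urlset>"
)
LLMS = (
    "# Example Co\n\n## Core\n\n"
    "- [Pricing](https://example.com/pricing): Plans\n"
    "- [Old Plans](https://example.com/plans-2023): Retired\n"
)
print(find_stale_links(LLMS, SITEMAP))
```

Running a check like this after each redesign or restructure flags links that need updating before an AI tool follows them to a dead page.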

How TG integrates this into client work: For clients on SEO and content retainers, we build llms.txt review into our regular cadence alongside technical audits, content performance analysis, and CRO experiments. It becomes part of the system, not an afterthought.

Prepare Your Website for AI-Driven Discovery

Getting ahead of AI-driven search doesn’t require reinventing your entire strategy. It starts with intentional steps, like providing LLMs with a clear, curated guide to your most important content through an llms.txt file.

As AI assistants become primary discovery channels alongside traditional search engines, the brands that guide these systems will capture leads that competitors miss. The question isn’t whether AI will influence how prospects find you—it’s whether you’ll be ready when it does.

Ready to assess whether llms.txt fits your growth strategy? Contact TG for a free consultation to evaluate your site’s AI-readiness alongside your broader SEO, content, and digital marketing roadmap. We’ll help you determine where llms.txt adds value—and where to focus your resources for maximum impact.